Nvidia, a leader in AI and GPU technology, has open-sourced significant components of the Run:ai platform, starting with the KAI Scheduler, a Kubernetes-native GPU scheduling solution. The move aligns Nvidia with the growing trend toward open source and reinforces its commitment to enterprise AI infrastructure. By releasing the KAI Scheduler under the Apache 2.0 license, Nvidia opens the door to collaboration and contributions from the community. The release is more than a technical upgrade; it reflects Nvidia’s broader strategy of cultivating an active ecosystem around its AI technology.

Transforming AI Workload Management

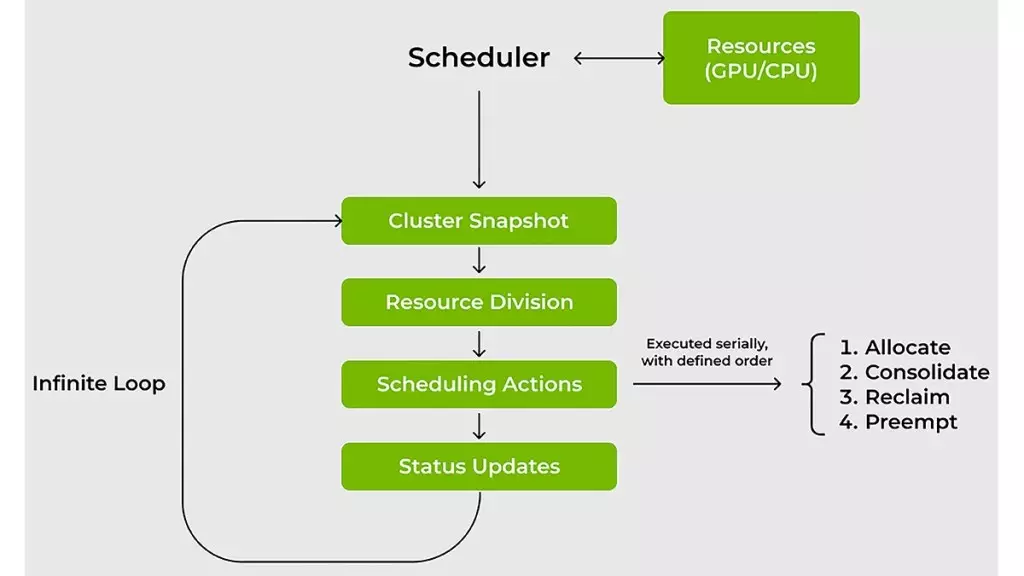

At the center of this offering is the KAI Scheduler, designed to address problems that conventional resource schedulers handle poorly. As enterprises adopt AI, imbalances in demand for GPU and CPU resources lead to inefficiencies that slow teams down. Traditional schedulers often struggle with how quickly AI workloads change: today a user might need a single GPU for exploratory data analysis, and tomorrow several GPUs for distributed training. The KAI Scheduler continuously evaluates workload demand and adjusts allocations in real time, reducing wait times and improving GPU availability.

The Symbiosis of Fairness and Efficiency

Time is a critical resource for machine learning engineers, and the KAI Scheduler is designed with that in mind. It combines gang scheduling (the pods of a job are placed together or not at all), GPU sharing, and a hierarchy-based queuing model. Users can submit large batches of jobs and return to their core work, knowing that the scheduler will allocate resources as they become available. This hands-off approach matters in a field where scheduling efficiency translates directly into iteration speed: it removes the need for constant manual intervention and frees teams for more strategic work.
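To make the gang scheduling idea concrete, here is a minimal sketch of all-or-nothing placement. It is not the KAI Scheduler's implementation: the function name `gang_schedule`, the node names, and the greedy first-fit strategy are assumptions chosen for illustration, and a greedy pass can miss a packing that a smarter search would find.

```python
from typing import Optional


def gang_schedule(free_gpus: dict[str, int],
                  pod_requests: list[int]) -> Optional[dict[int, str]]:
    """Place every pod of a job, or none of them (hypothetical helper).

    free_gpus maps node name -> free GPU count and is only updated
    when the whole job fits. pod_requests lists GPUs needed per pod.
    """
    remaining = dict(free_gpus)  # scratch copy, so a failed attempt changes nothing
    placement: dict[int, str] = {}

    # Try the largest pods first; they are the hardest to fit.
    order = sorted(range(len(pod_requests)), key=lambda i: -pod_requests[i])
    for pod_index in order:
        need = pod_requests[pod_index]
        # First node (in name order) with enough free GPUs.
        node = next((n for n in sorted(remaining) if remaining[n] >= need), None)
        if node is None:
            return None  # one pod does not fit, so the whole job waits
        remaining[node] -= need
        placement[pod_index] = node

    free_gpus.update(remaining)  # commit only after every pod has a node
    return placement
```

With `{"node-a": 4, "node-b": 2}` free, a job of three 2-GPU pods is placed in full, while a job of two 4-GPU pods returns `None` and leaves the free counts untouched, so no GPUs sit reserved by a half-started job.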

Strategies for Optimal Resource Utilization

KAI Scheduler uses several strategies to maximize GPU and CPU usage and to counter the common problems of underutilization and resource fragmentation. Bin-packing places smaller tasks onto partially used GPUs and CPUs, and consolidation reallocates tasks across nodes to reduce node fragmentation. The scheduler can also spread workloads evenly across nodes, which limits per-node bottlenecks and improves throughput.
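The difference between bin-packing and spreading comes down to which candidate node is preferred. The sketch below illustrates that choice under simplifying assumptions: `pick_node` and the strategy names are hypothetical, nodes are described only by a free GPU count, and the real scheduler weighs far more than this.

```python
from typing import Optional


def pick_node(free_gpus: dict[str, int], request: int,
              strategy: str = "binpack") -> Optional[str]:
    """Choose a node for a task that needs `request` GPUs (illustrative only)."""
    # Only nodes with enough free GPUs are candidates.
    candidates = [(free, name) for name, free in free_gpus.items() if free >= request]
    if not candidates:
        return None
    if strategy == "binpack":
        # Tightest fit: fill nearly full nodes first, keeping large gaps intact.
        return min(candidates)[1]
    if strategy == "spread":
        # Loosest fit: prefer the emptiest node to balance load.
        return min(candidates, key=lambda c: (-c[0], c[1]))[1]
    raise ValueError(f"unknown strategy: {strategy}")


nodes = {"node-a": 1, "node-b": 3, "node-c": 8}
print(pick_node(nodes, 2, "binpack"))  # node-b
print(pick_node(nodes, 2, "spread"))   # node-c
```

Bin-packing leaves `node-c` fully free for a later 8-GPU job, whereas spreading trades that headroom for lower contention on each node.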

In shared clusters where teams tend to hoard resources, KAI Scheduler relies on resource guarantees. Each AI team keeps the GPUs allocated to it, while GPUs that sit idle are dynamically redistributed to other workloads. This discourages hoarding and raises overall cluster efficiency.
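One way to picture guarantees with redistribution is a two-step allocation: first give each team up to its guaranteed quota, then lend whatever is left to teams that still want more. The sketch below assumes a hypothetical `allocate` function, integer GPU counts, and a simple round-robin rule for spare GPUs; the scheduler's actual fairness policy is not described in this article.

```python
def allocate(total_gpus: int, quotas: dict[str, int],
             demands: dict[str, int]) -> dict[str, int]:
    """Guaranteed quota first, then share idle GPUs (illustrative sketch)."""
    if sum(quotas.values()) > total_gpus:
        raise ValueError("guaranteed quotas exceed cluster capacity")

    # Step 1: every team gets what it asks for, up to its guarantee.
    alloc = {team: min(demands.get(team, 0), quota) for team, quota in quotas.items()}
    spare = total_gpus - sum(alloc.values())

    # Step 2: hand out idle GPUs one at a time to teams with unmet demand.
    while spare > 0:
        hungry = [t for t in sorted(quotas) if demands.get(t, 0) > alloc[t]]
        if not hungry:
            break  # nobody wants more; the rest stays idle
        for team in hungry:
            if spare == 0:
                break
            alloc[team] += 1
            spare -= 1
    return alloc


print(allocate(8, {"prod": 4, "research": 4}, {"prod": 2, "research": 7}))
# {'prod': 2, 'research': 6}
```

Here `research` borrows the two GPUs that `prod` is not using. A full scheduler would also need a way to return borrowed GPUs when `prod` asks for its guarantee back, which this sketch leaves out.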

Simplifying Complexity in AI Integration

Connecting workloads from different AI frameworks to a scheduler usually involves a maze of manual configuration that can stall development. The KAI Scheduler includes a built-in podgrouper that automatically detects and connects with tools such as Kubeflow, Ray, and Argo, removing many of those setup steps. This shortens the development cycle, reduces time to prototype, and lets teams focus on refining their algorithms rather than on configuration.
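The article does not describe how the podgrouper works internally. As a rough mental model only, grouping can be thought of as bucketing pods by the workload object that owns them, so that all pods of one framework job are scheduled as a unit. In the sketch below, `group_pods` and the flattened `owner` field are hypothetical simplifications, not the Kubernetes API schema or the KAI Scheduler's code.

```python
from collections import defaultdict


def group_pods(pods: list[dict]) -> dict[tuple[str, str], list[str]]:
    """Bucket pod names by their owning workload (hypothetical sketch)."""
    groups: dict[tuple[str, str], list[str]] = defaultdict(list)
    for pod in pods:
        owner = pod.get("owner")
        # A pod without an owner forms a group of its own.
        key = (owner["kind"], owner["name"]) if owner else ("Pod", pod["name"])
        groups[key].append(pod["name"])
    return {key: sorted(names) for key, names in groups.items()}


pods = [
    {"name": "train-worker-0", "owner": {"kind": "PyTorchJob", "name": "train"}},
    {"name": "train-worker-1", "owner": {"kind": "PyTorchJob", "name": "train"}},
    {"name": "ray-head", "owner": {"kind": "RayCluster", "name": "tuning"}},
    {"name": "debug-shell"},
]
for key, names in group_pods(pods).items():
    print(key, names)
```

Each resulting group could then be handed to a gang scheduling step, so a two-worker training job is never started with only one worker.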

A Call for Community Engagement

The open-sourcing of the KAI Scheduler is more than a technical undertaking; it is an invitation to the broader AI community to build on the scheduler together. By encouraging contributions, feedback, and shared insights from developers, Nvidia aims to advance enterprise AI in collaboration with its users. The initiative strengthens Nvidia’s standing in the industry and promotes a more unified approach to the challenges AI practitioners face, pointing toward AI workloads that adapt efficiently as requirements grow.